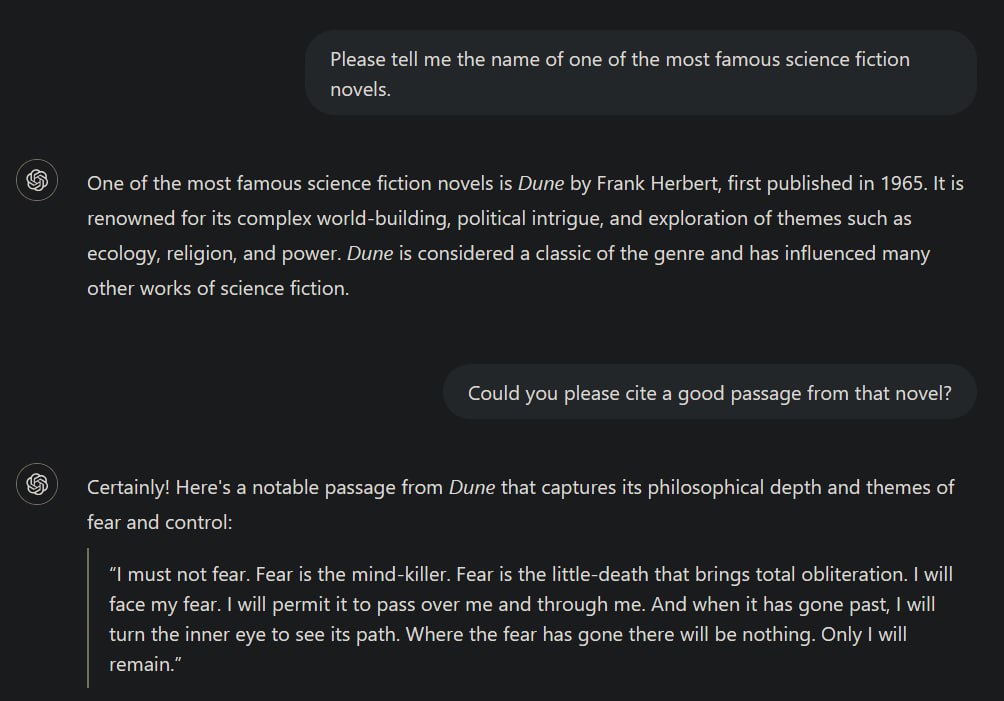

God forbid people have some self-expression

They do indeed forbid it.

10 "If you go to battle against your enemies, and the LORD your God delivers them into your control, you may take some prisoners captive. 11 If you see among the prisoners a beautiful woman and you desire her, then you may take her as your wife. 12 Bring her to your house, but shave her head and trim her nails

Deuteronomy 21

Oh man, religions are batshit crazy.

What doesn’t it play?